AI-Powered Meeting Management Chatbot with Amazon Lex V2

In today's fast-paced business environment, managing meetings efficiently has become more critical than ever. Traditional scheduling systems often require multiple steps, complex interfaces, and significant manual intervention. What if we could simplify this process using conversational AI? That is what we set out to do with Meety, a meeting management application that pairs Amazon Lex V2 with a modern serverless architecture.

Meety represents a new approach to meeting management, where users can schedule meetings through natural language conversations while administrators maintain full control through a dedicated web interface. Built entirely on AWS serverless technologies, the application demonstrates how modern cloud services can create intuitive, scalable, and cost-effective solutions for everyday business challenges.

The Vision Behind Meety

The inspiration for Meety came from observing the friction in traditional meeting scheduling processes. Users typically need to navigate through multiple calendar interfaces, send numerous emails, and coordinate across different platforms. We envisioned a system where scheduling a meeting could be as simple as having a conversation with an intelligent assistant.

Our goal was to create an application that would serve two distinct user groups: end users who want to schedule meetings effortlessly through natural language, and administrators who need comprehensive tools to manage and approve these meeting requests. This dual-purpose approach required careful architectural planning to ensure both user experiences remained optimal while sharing the same underlying data and infrastructure.

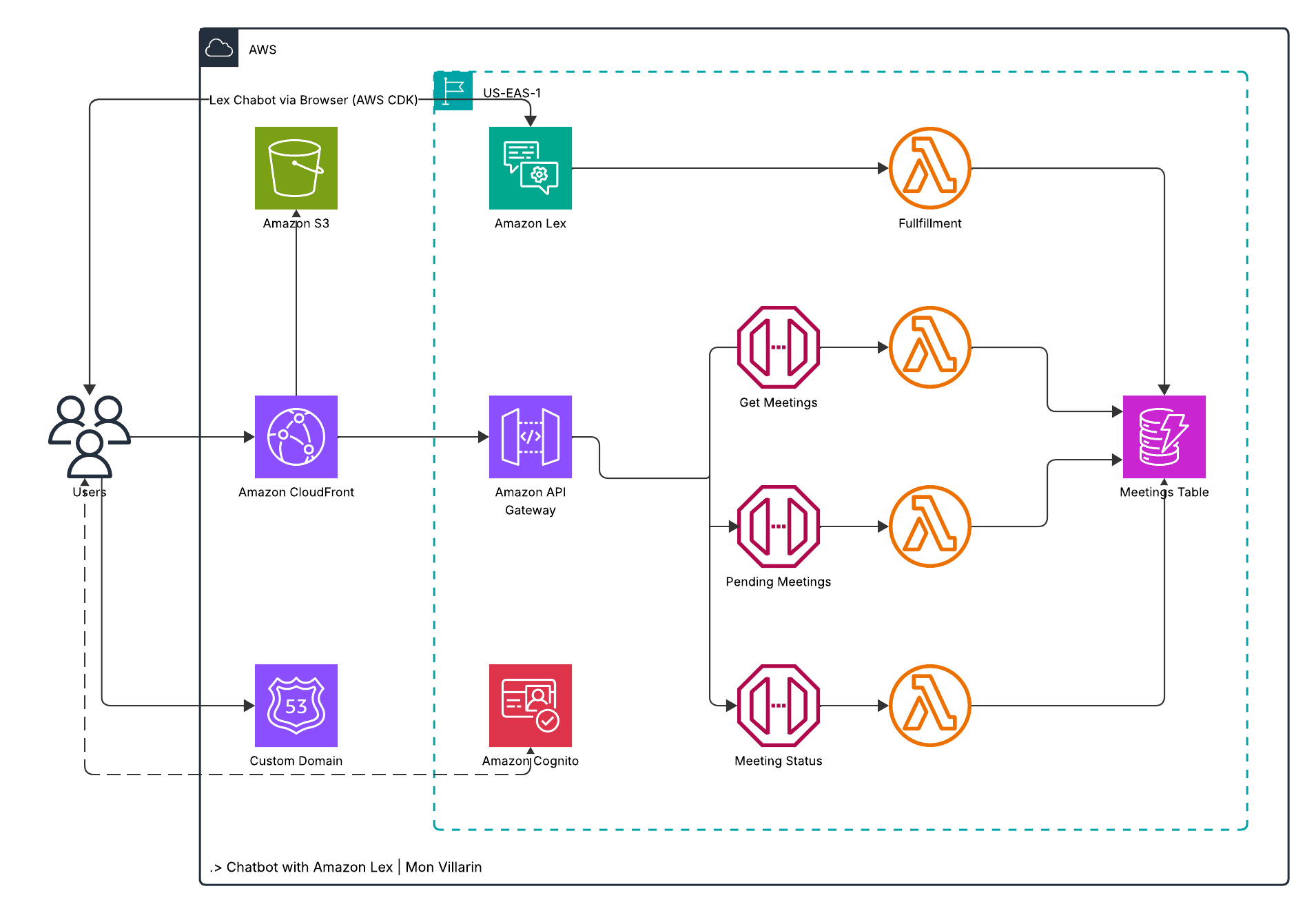

Architectural Philosophy: Serverless First

From the project's inception, we committed to a serverless-first architecture. This decision was driven by several key factors: cost efficiency, automatic scaling, reduced operational overhead, and the ability to focus on business logic rather than infrastructure management. Every component in Meety leverages managed AWS services, eliminating the need for server provisioning, patching, or capacity planning.

The serverless approach also aligned perfectly with the application's usage patterns. Meeting scheduling typically involves sporadic bursts of activity rather than consistent load, making serverless computing an ideal fit. Users might schedule multiple meetings in the morning and then not interact with the system for hours, a pattern that serverless architectures handle exceptionally well.

Core Technologies and Services

Amazon Lex V2: The Conversational Brain

At the heart of Meety lies Amazon Lex V2, AWS's managed service for building conversational interfaces. Instead of filling in a form, users schedule meetings by describing them in natural language. Lex handles intent recognition, slot filling, and conversation flow management, prompting for whatever details are still missing until the request is complete.

We configured Lex V2 with three primary intents: StartMeety for initial greetings, MeetingAssistant for the core scheduling functionality, and FallbackIntent for handling unexpected inputs. The MeetingAssistant intent includes six carefully designed slots that collect essential meeting information: attendee name, meeting date, time, duration, email address, and final confirmation. This slot-based approach ensures all necessary information is gathered while maintaining conversational flow.
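For readers new to Lex V2's Lambda integration, here is a minimal sketch of what a fulfillment handler for an intent like MeetingAssistant receives and returns. It follows Lex V2's event shape (`sessionState.intent.slots`, where each filled slot carries `value.interpretedValue`) and response shape (a `Close` dialog action). The slot names (`AttendeeName`, `MeetingDate`, and so on) and the yes/no check on the confirmation slot are assumptions for illustration, not taken from the Meety repository.

```python
from typing import Any, Optional

# Hypothetical slot names; the bot's actual slot names may differ.
SLOT_NAMES = [
    "AttendeeName",
    "MeetingDate",
    "MeetingTime",
    "MeetingDuration",
    "AttendeeEmail",
    "Confirmation",
]


def get_slot_value(slots: dict[str, Any], name: str) -> Optional[str]:
    """Return a slot's interpretedValue, or None if Lex has not filled it."""
    slot = slots.get(name)
    if not slot or "value" not in slot:
        return None
    return slot["value"].get("interpretedValue")


def close_intent(intent_name: str, message: str, state: str = "Fulfilled") -> dict[str, Any]:
    """Build a Lex V2 response that ends the conversation for this intent."""
    return {
        "sessionState": {
            "dialogAction": {"type": "Close"},
            "intent": {"name": intent_name, "state": state},
        },
        "messages": [{"contentType": "PlainText", "content": message}],
    }


def lambda_handler(event: dict[str, Any], context: Any = None) -> dict[str, Any]:
    intent = event["sessionState"]["intent"]
    slots = intent.get("slots") or {}
    values = {name: get_slot_value(slots, name) for name in SLOT_NAMES}

    # Hypothetical yes/no confirmation slot: anything but "yes" cancels.
    if (values["Confirmation"] or "").lower() not in {"yes", "y"}:
        return close_intent(intent["name"], "Okay, I won't schedule that meeting.", state="Failed")

    # Saving the meeting to DynamoDB is left out of this sketch.
    summary = (
        f"Your meeting with {values['AttendeeName']} on {values['MeetingDate']} "
        f"at {values['MeetingTime']} has been submitted for approval."
    )
    return close_intent(intent["name"], summary)
```

Returning `Close` with state `Fulfilled` tells Lex the conversation for this intent is finished, so it stops prompting for slots.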

Amazon Cognito: Dual Authentication Strategy

Authentication in Meety uses a dual-mode approach built on Amazon Cognito. The system supports anonymous access for chatbot interactions and authenticated access for administrative functions, so anyone can schedule a meeting without signing in while administrative operations stay protected.

The Cognito Identity Pool provides temporary AWS credentials for both authenticated and unauthenticated users, with carefully crafted IAM policies that grant appropriate permissions for each user type. Anonymous users can interact with Lex V2 directly, while authenticated administrators gain access to additional API endpoints for meeting management.
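For a guest, obtaining those temporary credentials comes down to two Cognito Identity calls: `GetId` to obtain an identity ID from the pool, then `GetCredentialsForIdentity` to exchange it for credentials tied to the pool's unauthenticated role. The sketch below shows that exchange with boto3; a browser frontend would make the equivalent calls through the AWS SDK for JavaScript. The helper name and the choice to pass in a preconfigured client are our own.

```python
from typing import Any


def get_guest_credentials(cognito_identity: Any, identity_pool_id: str) -> dict[str, str]:
    """Exchange an Identity Pool ID for temporary unauthenticated credentials.

    `cognito_identity` is expected to be boto3.client("cognito-identity").
    """
    # No Logins map is passed, so Cognito issues an unauthenticated (guest) identity.
    identity_id = cognito_identity.get_id(IdentityPoolId=identity_pool_id)["IdentityId"]
    creds = cognito_identity.get_credentials_for_identity(IdentityId=identity_id)["Credentials"]
    # Keys match the keyword arguments accepted by boto3.client(...).
    return {
        "aws_access_key_id": creds["AccessKeyId"],
        "aws_secret_access_key": creds["SecretKey"],
        "aws_session_token": creds["SessionToken"],
    }
```

Passing the returned dict as keyword arguments, for example `boto3.client("lexv2-runtime", region_name=region, **creds)`, yields a client that can do only what the unauthenticated role allows.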

AWS Lambda: Serverless Computing Power

Four distinct Lambda functions power Meety's backend operations. The generative-lex-fulfillment function serves as the primary fulfillment handler for Lex V2, processing meeting scheduling requests and storing data in DynamoDB. Three additional functions handle administrative operations: get-meetings retrieves approved meetings within specified date ranges, get-pending-meetings fetches meetings awaiting approval, and change-meeting-status enables administrators to approve or reject meeting requests.
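The change-meeting-status function is a natural fit for a DynamoDB conditional write. The sketch below assumes a `meetingId` partition key and the status values `pending`, `approved`, and `rejected`, none of which are confirmed by the source. It only updates a meeting that is still pending and turns a failed condition into an HTTP 409. `table` is meant to be a boto3 `Table` resource, and the API Gateway-style return shape is also an assumption.

```python
import json
from typing import Any

# Hypothetical target statuses an administrator may set.
ALLOWED_TRANSITIONS = {"approved", "rejected"}


def build_status_update(meeting_id: str, new_status: str) -> dict[str, Any]:
    """Build update_item arguments that only succeed while the meeting is pending."""
    if new_status not in ALLOWED_TRANSITIONS:
        raise ValueError(f"unsupported status: {new_status!r}")
    return {
        "Key": {"meetingId": meeting_id},
        "UpdateExpression": "SET #s = :new",
        # Fails if the item is missing or no longer pending, so update_item
        # never creates a new item or overwrites an earlier decision.
        "ConditionExpression": "#s = :pending",
        # "status" is a DynamoDB reserved word, hence the #s placeholder.
        "ExpressionAttributeNames": {"#s": "status"},
        "ExpressionAttributeValues": {":new": new_status, ":pending": "pending"},
        "ReturnValues": "ALL_NEW",
    }


def change_meeting_status(table: Any, meeting_id: str, new_status: str) -> dict[str, Any]:
    try:
        kwargs = build_status_update(meeting_id, new_status)
    except ValueError as err:
        return {"statusCode": 400, "body": json.dumps({"error": str(err)})}
    try:
        resp = table.update_item(**kwargs)
    except Exception as err:
        # botocore's ClientError exposes the service error code via .response.
        code = getattr(err, "response", {}).get("Error", {}).get("Code")
        if code == "ConditionalCheckFailedException":
            return {"statusCode": 409, "body": json.dumps({"error": "meeting is not pending"})}
        raise
    # default=str handles the Decimal values DynamoDB returns for numbers.
    return {"statusCode": 200, "body": json.dumps(resp["Attributes"], default=str)}
```

Putting the business rule in the condition expression means two administrators acting on the same request at once cannot both succeed.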

Each Lambda function is optimized for its specific purpose, with tailored IAM roles that follow the principle of least privilege. The functions are written in Python 3.12, leveraging the boto3 SDK for AWS service interactions and implementing comprehensive error handling and logging.

Amazon DynamoDB: Flexible Data Storage

Meeting data is stored in Amazon DynamoDB, chosen for its serverless nature, automatic scaling, and flexible schema. The application uses a single table: its primary key supports lookups of individual meetings, and a Global Secondary Index on the status field supports status-based queries such as listing all pending or approved meetings.
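A status index lets the get-meetings and get-pending-meetings lookups run as a `Query` rather than a full-table `Scan`. This sketch assumes the index is named `status-index` and has a `meetingDate` sort key stored as `YYYY-MM-DD`; both names are hypothetical. If the real index keys on status alone, the date range would move into a `FilterExpression`, which DynamoDB applies after items are read.

```python
from typing import Any

# Hypothetical index: partition key "status", sort key "meetingDate" (YYYY-MM-DD).
INDEX_NAME = "status-index"


def get_meetings(table: Any, status: str, start_date: str, end_date: str) -> list[dict[str, Any]]:
    """Return all meetings with `status` whose date falls in [start_date, end_date].

    `table` is expected to be a boto3 DynamoDB Table resource.
    """
    kwargs: dict[str, Any] = {
        "IndexName": INDEX_NAME,
        # ISO dates sort lexicographically, so BETWEEN works on the strings.
        "KeyConditionExpression": "#s = :status AND #d BETWEEN :start AND :end",
        "ExpressionAttributeNames": {"#s": "status", "#d": "meetingDate"},
        "ExpressionAttributeValues": {":status": status, ":start": start_date, ":end": end_date},
    }
    items: list[dict[str, Any]] = []
    while True:
        page = table.query(**kwargs)
        items.extend(page.get("Items", []))
        # One Query call returns at most 1 MB; follow LastEvaluatedKey for the rest.
        last_key = page.get("LastEvaluatedKey")
        if not last_key:
            return items
        kwargs["ExclusiveStartKey"] = last_key
```

Under these assumptions, fetching pending meetings is the same call with `status="pending"`.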

The DynamoDB table stores comprehensive meeting information, including unique meeting IDs, attendee details, scheduling information, current status, and creation timestamps. This design supports both the conversational interface's need for fast inserts and the administrative interface's need for status-based queries and status updates.
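A record like the one described above might be assembled as follows. The attribute names, the `pending` starting status (inferred from the approval workflow), and the use of a random UUID for the meeting ID are assumptions. The conditional `put_item` is one way to guarantee a write never silently replaces an existing meeting.

```python
import uuid
from datetime import datetime, timezone
from typing import Any, Optional


def build_meeting_item(
    attendee_name: str,
    attendee_email: str,
    meeting_date: str,
    meeting_time: str,
    duration_minutes: int,
    now: Optional[datetime] = None,
) -> dict[str, Any]:
    """Assemble a new meeting record; attribute names are hypothetical."""
    created = now or datetime.now(timezone.utc)
    return {
        "meetingId": str(uuid.uuid4()),  # random, collision-resistant key
        "attendeeName": attendee_name,
        "email": attendee_email,
        "meetingDate": meeting_date,  # e.g. "2024-05-01"
        "startTime": meeting_time,  # e.g. "14:00"
        "duration": duration_minutes,
        "status": "pending",  # new requests wait for administrator approval
        "createdAt": created.isoformat(),
    }


def save_meeting(table: Any, item: dict[str, Any]) -> None:
    """`table` is expected to be a boto3 DynamoDB Table resource."""
    # Refuse to overwrite an existing item with the same key.
    table.put_item(Item=item, ConditionExpression="attribute_not_exists(meetingId)")
```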

Amazon S3 and CloudFront: Global Content Delivery

The frontend application is hosted on Amazon S3 with CloudFront distribution, providing global content delivery with minimal latency. This combination offers several advantages: automatic scaling to handle traffic spikes, built-in security features, and cost-effective hosting for static content.

CloudFront's integration with AWS Certificate Manager lets the distribution serve the site over HTTPS, while Origin Access Control ensures that S3 content is reachable only through the CloudFront distribution, so the bucket itself can stay private.
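With Origin Access Control, the bucket policy grants read access to the CloudFront service principal, scoped to a single distribution through an `AWS:SourceArn` condition. The helper below generates a policy of that shape; the function name and parameters are illustrative. Because Meety's infrastructure is defined in Terraform, the equivalent policy there would be written in HCL rather than built in Python.

```python
import json


def oac_bucket_policy(bucket_name: str, account_id: str, distribution_id: str) -> str:
    """Bucket policy letting one CloudFront distribution (via OAC) read objects."""
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowCloudFrontServicePrincipalReadOnly",
                "Effect": "Allow",
                "Principal": {"Service": "cloudfront.amazonaws.com"},
                "Action": "s3:GetObject",
                "Resource": f"arn:aws:s3:::{bucket_name}/*",
                # Scopes access to this one distribution rather than
                # the CloudFront service principal as a whole.
                "Condition": {
                    "StringEquals": {
                        "AWS:SourceArn": (
                            f"arn:aws:cloudfront::{account_id}:distribution/{distribution_id}"
                        )
                    }
                },
            }
        ],
    }
    return json.dumps(policy, indent=2)
```

Note that CloudFront distribution ARNs have an empty region field, hence the `::` in the source ARN.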

Direct Lex Integration: A Technical Innovation

One of Meety's most significant technical innovations is the direct integration between the frontend and Amazon Lex V2. Rather than routing chatbot interactions through API Gateway and Lambda functions, the frontend communicates directly with Lex using the AWS SDK. This approach offers several compelling advantages.

First, it eliminates unnecessary network hops, reducing latency and improving user experience. Second, it simplifies the architecture by removing intermediate components that would otherwise require maintenance and monitoring. Third, it reduces costs by eliminating API Gateway charges for chatbot interactions, which can be substantial in high-volume scenarios.
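Assuming the frontend sends typed text through Lex V2's `RecognizeText` runtime operation, each chat turn is a single request carrying the bot ID, alias ID, locale, a session ID, and the user's text. The sketch below shows that request with boto3's `lexv2-runtime` client for consistency with the Lambda code; a browser frontend would issue the same operation through the JavaScript SDK. The helper name and the `en_US` default are illustrative.

```python
from typing import Any


def send_to_lex(
    lex_client: Any,
    bot_id: str,
    bot_alias_id: str,
    session_id: str,
    text: str,
    locale_id: str = "en_US",
) -> list[str]:
    """Send one user utterance to Lex V2 and return the bot's reply texts.

    `lex_client` is expected to be boto3.client("lexv2-runtime").
    """
    response = lex_client.recognize_text(
        botId=bot_id,
        botAliasId=bot_alias_id,
        localeId=locale_id,
        sessionId=session_id,  # reuse the same ID to keep slot state across turns
        text=text,
    )
    # "messages" may be absent when Lex has nothing to say for this turn.
    return [m["content"] for m in response.get("messages", []) if "content" in m]
```

Keeping one `sessionId` per conversation lets Lex carry partially filled slots from one turn to the next, which is what makes multi-turn slot filling work without any server-side session store of our own.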

The direct integration required careful consideration of authentication and security. We implemented this using Cognito Identity Pool's unauthenticated role, which provides temporary AWS credentials with permissions limited to Lex interactions. This approach maintains security while enabling seamless user experiences.
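A permissions policy for the unauthenticated role along these lines would confine guests to text conversations with a single bot alias. The `lex:RecognizeText` action and the bot-alias ARN format come from AWS; whether Meety grants additional actions (for example `lex:RecognizeUtterance` for voice) is not stated, so treat this as a minimal sketch with an illustrative helper name.

```python
import json


def guest_lex_policy(region: str, account_id: str, bot_id: str, bot_alias_id: str) -> str:
    """Permissions for the Identity Pool's unauthenticated role: text chat with one bot alias."""
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["lex:RecognizeText"],
                # Lex V2 runtime calls are authorized against the bot alias ARN.
                "Resource": f"arn:aws:lex:{region}:{account_id}:bot-alias/{bot_id}/{bot_alias_id}",
            }
        ],
    }
    return json.dumps(policy)
```

Scoping the resource to one alias means leaked guest credentials cannot be used to talk to other bots or call any non-Lex API.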

Infrastructure as Code with Terraform

Meety's entire infrastructure is defined using Terraform, embodying infrastructure as code principles. This approach provides several critical benefits: version control for infrastructure changes, reproducible deployments across environments, and the ability to tear down and recreate the entire stack when needed.

The Terraform configuration is organized into logical modules covering different aspects of the system: API Gateway and Lambda functions, Cognito authentication, DynamoDB storage, Lex bot configuration, and frontend hosting. This modular approach makes the infrastructure maintainable and allows for independent updates to different system components.

Environment-specific configurations are managed through Terraform variables, with sensitive values externalized to terraform.tfvars files that are excluded from version control. This pattern enables secure deployment across multiple environments while maintaining configuration flexibility.

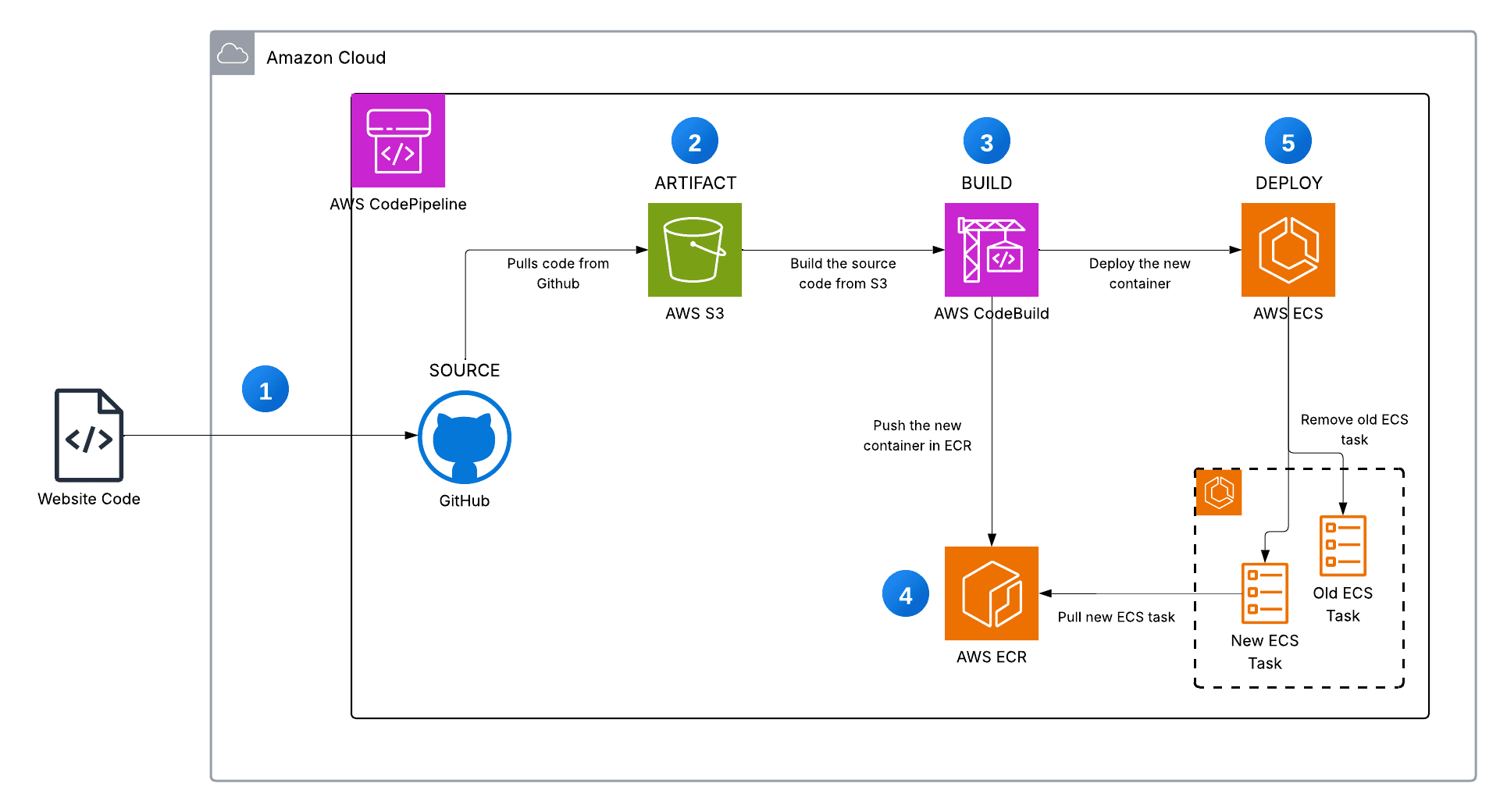

Automated Deployment Pipeline

Recognizing that complex multi-service applications can be challenging to deploy, we created a comprehensive automated deployment pipeline. The master deployment script orchestrates the entire process: building Lambda deployment packages, applying Terraform configurations, configuring Lex intents and slots, creating bot aliases, updating frontend configurations with actual resource IDs, and deploying static assets to S3.
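One step in that pipeline, writing real resource IDs into the frontend configuration, can be sketched by reading `terraform output -json`, which prints each output as an object with `sensitive`, `type`, and `value` fields. The output names and config keys below are hypothetical, and values created after `terraform apply` (such as the bot alias ID) would have to be merged in separately.

```python
import json
import subprocess
from typing import Any

# Hypothetical mapping from Terraform output names to frontend config keys.
OUTPUT_TO_CONFIG = {
    "lex_bot_id": "botId",
    "identity_pool_id": "identityPoolId",
    "api_base_url": "apiBaseUrl",
}


def config_from_outputs(outputs_json: str) -> dict[str, Any]:
    """Turn `terraform output -json` text into a flat frontend config dict."""
    outputs = json.loads(outputs_json)
    missing = [name for name in OUTPUT_TO_CONFIG if name not in outputs]
    if missing:
        raise KeyError(f"missing Terraform outputs: {', '.join(missing)}")
    # Each output looks like {"sensitive": false, "type": "string", "value": ...}.
    return {key: outputs[name]["value"] for name, key in OUTPUT_TO_CONFIG.items()}


def read_terraform_outputs(workdir: str = ".") -> str:
    """Run `terraform output -json` in `workdir` and return its stdout."""
    result = subprocess.run(
        ["terraform", "output", "-json"],
        cwd=workdir,
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout
```

Failing loudly on a missing output is deliberate: a frontend deployed with an empty bot ID would only break at runtime, in front of users.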

This automation hides most of the deployment complexity and reduces the potential for human error. A complete deployment, from an empty AWS account to a fully functional application, takes approximately 5-10 minutes and requires a single command.

Security Considerations and Best Practices

Security was a primary consideration throughout Meety's development. The application implements multiple layers of security controls: IAM roles with minimal required permissions, JWT-based authentication for administrative functions, HTTPS encryption for all communications, and secure credential management through Cognito Identity Pools.

All Lambda functions include comprehensive input validation and error handling to prevent injection attacks and ensure graceful failure modes. DynamoDB access is restricted through IAM policies that limit operations to specific tables and indexes. The frontend implements Content Security Policy headers and other security best practices to protect against common web vulnerabilities.
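As an illustration of that kind of input validation, the function below checks collected meeting details before anything is written to DynamoDB. The field names, length limit, and 15 to 480 minute duration window are assumptions, not Meety's actual rules. The date and time checks assume the formats Lex V2's built-in `AMAZON.Date` and `AMAZON.Time` slot types typically resolve to (`YYYY-MM-DD` and `HH:MM`); anything else is rejected rather than guessed at.

```python
import re
from datetime import date, datetime
from typing import Any

# Deliberately simple pattern: rejects obvious garbage, not a full RFC 5322 check.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")
MAX_NAME_LEN = 100  # hypothetical limit
ALLOWED_DURATIONS = range(15, 481)  # minutes; hypothetical bounds


def validate_meeting_request(values: dict[str, Any], today: date) -> list[str]:
    """Return a list of validation errors; an empty list means the request is acceptable."""
    errors: list[str] = []
    name = (values.get("attendee_name") or "").strip()
    if not name or len(name) > MAX_NAME_LEN:
        errors.append("attendee name is missing or too long")
    if not EMAIL_RE.match(values.get("email") or ""):
        errors.append("email address is not valid")
    try:
        # AMAZON.Date typically resolves to YYYY-MM-DD.
        meeting_day = date.fromisoformat(values.get("meeting_date") or "")
        if meeting_day < today:
            errors.append("meeting date is in the past")
    except ValueError:
        errors.append("meeting date must be YYYY-MM-DD")
    try:
        # AMAZON.Time typically resolves to 24-hour HH:MM.
        datetime.strptime(values.get("meeting_time") or "", "%H:%M")
    except ValueError:
        errors.append("meeting time must be HH:MM")
    try:
        if int(values.get("duration_minutes")) not in ALLOWED_DURATIONS:
            errors.append("duration must be between 15 and 480 minutes")
    except (TypeError, ValueError):
        errors.append("duration must be a whole number of minutes")
    return errors
```

Collecting every error instead of stopping at the first one lets the bot re-prompt for all the bad fields in a single reply.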

Performance Optimization and Scalability

Meety's serverless architecture provides inherent scalability, but we added further optimizations. Lambda functions are configured with memory allocations matched to their computational requirements. DynamoDB uses on-demand capacity mode, which bills per request and accommodates changes in traffic without any read or write capacity planning.

The frontend leverages CloudFront's global edge network for content delivery, with appropriate caching headers to minimize origin requests. Static assets are optimized for size and compressed using modern compression algorithms. The direct Lex integration eliminates unnecessary API calls, reducing both latency and costs.

Lessons Learned and Future Enhancements

Building Meety provided valuable insights into serverless application development and conversational AI implementation. We learned the importance of careful slot design in Lex conversations, the benefits of direct service integration where appropriate, and the value of comprehensive automation in complex deployments.

Future enhancements could include integration with external calendar systems, email notifications for meeting confirmations, support for recurring meetings, and advanced analytics for meeting patterns. The serverless architecture provides a solid foundation for these additions without requiring fundamental changes to the existing system.

Conclusion

Meety demonstrates the power of combining conversational AI with modern serverless architectures to solve real-world business problems. By leveraging AWS's managed services and implementing thoughtful architectural patterns, we created a system that is both user-friendly and technically robust.

The project showcases how serverless technologies can reduce operational complexity while providing enterprise-grade scalability and security. The direct Lex integration pattern, comprehensive automation, and infrastructure as code approach provide a blueprint for similar applications.

Most importantly, Meety shows that sophisticated AI-powered applications are within reach of development teams willing to embrace cloud-native architectures and modern development practices. The combination of natural language processing, serverless computing, and thoughtful user experience design creates possibilities for reimagining how we interact with business applications.

As organizations continue to seek more intuitive and efficient ways to manage their operations, applications like Meety point toward a future where conversational interfaces become the norm rather than the exception. The serverless foundation ensures these applications can scale to meet growing demands while maintaining cost efficiency and operational simplicity.

LinkedIn: https://www.linkedin.com/in/ramon-villarin/

Portfolio Site: MonVillarin.com

GitHub Project Repo: https://github.com/kurokood/chatbot-with-amazon-lex