Traditional 3-Tier Website Deployment on AWS: A Real-World Case Study

Introduction

In web application architecture, few patterns have stood the test of time like the traditional 3-tier architecture. It separates an application into three distinct layers: presentation, application, and database. Each layer serves a specific purpose and can be scaled, secured, and maintained independently.

What is 3-Tier Architecture?

The 3-tier architecture consists of:

Presentation Layer (Tier 1): The user interface and user experience components

Application Layer (Tier 2): The business logic and application processing

Database Layer (Tier 3): Data storage and management

This separation of concerns provides several key benefits:

Scalability: Each tier can be scaled independently based on demand

Security: Layers can be isolated with different security controls

Maintainability: Changes to one layer don't necessarily impact others

Flexibility: Different technologies can be used for each layer

Why AWS for 3-Tier Architecture?

Amazon Web Services (AWS) provides an ideal platform for deploying 3-tier architectures due to its:

Comprehensive Service Portfolio: From compute (EC2) to managed databases (RDS) to load balancing (ALB)

Global Infrastructure: Multiple regions and availability zones for high availability

Security Features: VPCs, security groups, and IAM for granular access control

Managed Services: Reduce operational overhead with services like RDS and EFS

Cost Optimization: Pay-as-you-go pricing with various instance types and storage options

In this case study, I'll walk you through how I built a production-ready WordPress hosting infrastructure on AWS using Terraform, implementing a traditional 3-tier architecture that's both secure and scalable.

Project Overview

The Challenge

I needed to create a robust, scalable WordPress hosting solution that could:

Handle Variable Traffic: Support both low and high traffic periods

Ensure High Availability: Minimize downtime through redundancy

Maintain Security: Protect against common web vulnerabilities

Enable Easy Management: Allow for straightforward updates and maintenance

Support Growth: Scale resources as the website grows

Project Goals

The primary objectives for this infrastructure project were:

Scalability: Design an architecture that can grow with demand

Security: Implement defense-in-depth security principles

High Availability: Deploy across multiple Availability Zones

Cost Efficiency: Use appropriate instance sizes and managed services

Maintainability: Create clean, modular infrastructure code

Production-Ready: Include monitoring, backup, and disaster recovery considerations

Technology Stack

Infrastructure as Code: Terraform for reproducible deployments

Cloud Platform: Amazon Web Services (AWS)

Application: WordPress (PHP-based CMS)

Database: MySQL via Amazon RDS

Web Server: Apache/Nginx on Amazon Linux 2

Storage: Amazon EFS for shared file storage

Architecture Details

Let me break down each layer of the architecture and explain the design decisions behind each component.

Networking Layer: The Foundation

The networking layer forms the foundation of our 3-tier architecture, providing the secure, isolated environment where our application will run.

Virtual Private Cloud (VPC)

VPC CIDR: 10.0.0.0/16

- Provides isolated network environment

- Enables custom routing and security policies

- Supports both IPv4 and IPv6 (if needed)

Subnet Strategy

I implemented a multi-AZ subnet strategy for high availability:

Public Subnets (2 AZs):

10.0.1.0/24 (us-east-1a)

10.0.2.0/24 (us-east-1b)

Host: Application Load Balancer, NAT Gateways

Direct internet access via Internet Gateway

Private Application Subnets (2 AZs):

10.0.11.0/24 (us-east-1a)

10.0.12.0/24 (us-east-1b)

Host: EC2 web servers, EFS mount targets

Internet access via NAT Gateways

Private Database Subnets (2 AZs):

10.0.21.0/24 (us-east-1a)

10.0.22.0/24 (us-east-1b)

Host: RDS database instances

No direct internet access
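The subnet layout above can be derived from the VPC CIDR instead of being hard-coded. This is a minimal sketch, not the project's actual networking module: the local names (`azs`, `public_subnets`, `app_subnets`, `db_subnets`) and resource names are assumptions made for illustration. It relies on Terraform's built-in `cidrsubnet` function.

```hcl
# Sketch only: local and resource names are illustrative assumptions.
locals {
  vpc_cidr = "10.0.0.0/16"
  azs      = ["us-east-1a", "us-east-1b"]

  # cidrsubnet(prefix, newbits, netnum): 8 extra bits turn the /16 into /24
  # blocks, and netnum selects which /24 (the third octet here).
  public_subnets = [for i in range(length(local.azs)) : cidrsubnet(local.vpc_cidr, 8, 1 + i)]  # 10.0.1.0/24, 10.0.2.0/24
  app_subnets    = [for i in range(length(local.azs)) : cidrsubnet(local.vpc_cidr, 8, 11 + i)] # 10.0.11.0/24, 10.0.12.0/24
  db_subnets     = [for i in range(length(local.azs)) : cidrsubnet(local.vpc_cidr, 8, 21 + i)] # 10.0.21.0/24, 10.0.22.0/24
}

resource "aws_vpc" "main" {
  cidr_block           = local.vpc_cidr
  enable_dns_support   = true
  enable_dns_hostnames = true
}

# One public subnet per AZ for the ALB and NAT Gateways.
resource "aws_subnet" "public" {
  count                   = length(local.azs)
  vpc_id                  = aws_vpc.main.id
  cidr_block              = local.public_subnets[count.index]
  availability_zone       = local.azs[count.index]
  map_public_ip_on_launch = true
}

# One private application subnet per AZ for web servers and EFS mount targets.
resource "aws_subnet" "private_app" {
  count             = length(local.azs)
  vpc_id            = aws_vpc.main.id
  cidr_block        = local.app_subnets[count.index]
  availability_zone = local.azs[count.index]
}

# One private database subnet per AZ for RDS.
resource "aws_subnet" "private_db" {
  count             = length(local.azs)
  vpc_id            = aws_vpc.main.id
  cidr_block        = local.db_subnets[count.index]
  availability_zone = local.azs[count.index]
}
```

Leaving gaps between the tiers (1-2, 11-12, 21-22) keeps room to add a third AZ per tier later without renumbering.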

Internet Connectivity Components

Internet Gateway: Provides internet access to public subnets

NAT Gateways: Enable outbound internet access for private subnets (for updates, patches)

Elastic IPs: Static IP addresses for NAT Gateways

Route Tables: Direct traffic flow between subnets and gateways

This networking design ensures that:

Web servers can receive updates but aren't directly accessible from the internet

Database servers are completely isolated from internet access

Load balancers can distribute traffic from the internet to private web servers

Application Layer: The Processing Engine

The application layer handles all business logic and serves as the bridge between users and data.

EC2 Instances

I deployed two EC2 instances across different Availability Zones:

Instance Configuration:

- Type: t3.medium (2 vCPU, 4 GB RAM)

- AMI: Amazon Linux 2

- Storage: 20 GB GP3 EBS volumes

- Placement: Private application subnets

Why t3.medium?

Burstable performance for variable WordPress workloads

Cost-effective for small to medium websites

Sufficient resources for WordPress + MySQL client + web server
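The instance configuration above could be expressed roughly as follows. This is a sketch under assumptions: the variable names, the resource name `web`, the `Name` tag, and the AMI name filter are illustrative and not taken from the project's compute module.

```hcl
# Hypothetical inputs; in the real project these would come from the
# networking and security modules.
variable "private_app_subnet_ids" {
  type = list(string)
}

variable "webserver_security_group_id" {
  type = string
}

# Look up the most recent Amazon Linux 2 AMI published by Amazon.
data "aws_ami" "amazon_linux_2" {
  most_recent = true
  owners      = ["amazon"]

  filter {
    name   = "name"
    values = ["amzn2-ami-hvm-*-x86_64-gp2"]
  }
}

# One instance per private application subnet, so one per AZ.
resource "aws_instance" "web" {
  count                  = length(var.private_app_subnet_ids)
  ami                    = data.aws_ami.amazon_linux_2.id
  instance_type          = "t3.medium"
  subnet_id              = var.private_app_subnet_ids[count.index]
  vpc_security_group_ids = [var.webserver_security_group_id]

  root_block_device {
    volume_type = "gp3"
    volume_size = 20 # GB
  }

  tags = {
    Name = "dev-wordpress-web-${count.index + 1}"
  }
}
```

Using `count` over the subnet list keeps the instance count tied to the number of AZs, so adding a subnet adds a server.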

Application Load Balancer (ALB)

The ALB serves as the entry point for all web traffic:

Features Implemented:

Health Checks: Monitors the /health endpoint on web servers

Cross-AZ Load Balancing: Distributes traffic across both availability zones

Sticky Sessions: Can be enabled for applications requiring session affinity

SSL Termination: Ready for HTTPS certificate attachment

Target Groups:

Health check path: /

Health check interval: 30 seconds

Healthy threshold: 2 consecutive successful checks

Unhealthy threshold: 5 consecutive failed checks
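The target group settings listed above map onto an `aws_lb_target_group` resource roughly like this. The resource name, target group name, `vpc_id` variable, and the `matcher` range are assumptions for the sake of a complete example.

```hcl
# Hypothetical input; normally an output of the networking module.
variable "vpc_id" {
  type = string
}

resource "aws_lb_target_group" "web" {
  name     = "dev-wordpress-web-tg"
  port     = 80
  protocol = "HTTP"
  vpc_id   = var.vpc_id

  health_check {
    path                = "/"
    interval            = 30 # seconds between checks
    healthy_threshold   = 2  # consecutive successes before "healthy"
    unhealthy_threshold = 5  # consecutive failures before "unhealthy"
    matcher             = "200-399" # assumption: treat redirects as healthy
  }

  # Sticky sessions are available but left off in this sketch.
  stickiness {
    type    = "lb_cookie"
    enabled = false
  }
}
```

With a 30-second interval, a failing instance is taken out of rotation after about 150 seconds (5 failed checks), and a recovered one returns after about 60 seconds (2 successful checks).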

Security Groups: Network-Level Firewalls

I implemented five distinct security groups following the principle of least privilege:

ALB Security Group:

Inbound: HTTP (80), HTTPS (443) from 0.0.0.0/0

Outbound: All traffic to 0.0.0.0/0

WebServer Security Group:

Inbound: HTTP (80), HTTPS (443) from ALB Security Group

Inbound: SSH (22) from SSH Security Group

Outbound: All traffic to 0.0.0.0/0

Database Security Group:

Inbound: MySQL (3306) from WebServer Security Group only

Outbound: All traffic to 0.0.0.0/0

EFS Security Group:

Inbound: NFS (2049) from WebServer Security Group

Inbound: NFS (2049) from self (for mount targets)

Outbound: All traffic to 0.0.0.0/0

SSH Security Group:

Inbound: SSH (22) from 0.0.0.0/0 (restrict in production)

Outbound: All traffic to 0.0.0.0/0
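Since SSH from 0.0.0.0/0 should be restricted in production, one option is to make the allowed source ranges an input and reject the open range at plan time. This is a sketch; the variable names and the rule's resource name are hypothetical.

```hcl
# Hypothetical input: ID of the SSH security group created elsewhere.
variable "ssh_security_group_id" {
  type = string
}

variable "admin_cidr_blocks" {
  description = "CIDR ranges allowed to reach SSH, for example an office or VPN range"
  type        = list(string)

  validation {
    condition     = length(var.admin_cidr_blocks) > 0 && !contains(var.admin_cidr_blocks, "0.0.0.0/0")
    error_message = "Provide at least one admin CIDR; 0.0.0.0/0 is not allowed for SSH."
  }
}

# Replaces the open SSH rule with one limited to the admin ranges.
resource "aws_security_group_rule" "ssh_from_admins" {
  type              = "ingress"
  from_port         = 22
  to_port           = 22
  protocol          = "tcp"
  cidr_blocks       = var.admin_cidr_blocks
  security_group_id = var.ssh_security_group_id
}
```

The validation block turns an unsafe value into a plan-time error instead of a deployed rule.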

Database Layer: The Data Foundation

The database layer provides persistent, reliable data storage for the WordPress application.

Amazon RDS MySQL

I chose RDS over self-managed MySQL for the reasons listed under Benefits of RDS below. The instance is configured as follows:

Configuration:

Engine: MySQL 8.0

Instance Class: db.t3.micro

Storage: 20 GB GP2 (expandable)

Multi-AZ: Enabled for production

Backup Retention: 7 days

Maintenance Window: Sunday 3:00-4:00 AM UTC

Benefits of RDS:

Automated Backups: Point-in-time recovery within the backup retention period (configurable up to 35 days; 7 days in this setup)

Multi-AZ Deployment: Automatic failover for high availability

Automated Patching: OS and database patches applied automatically

Monitoring: CloudWatch metrics and Performance Insights

Security: Encryption at rest and in transit options
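For reference, the RDS configuration above could look like this in Terraform. This is a sketch, not the project's database module: the variable names, identifiers, `db_name`, `storage_encrypted`, and the backup window are assumptions.

```hcl
# Hypothetical inputs; normally wired in from the networking and security modules.
variable "private_db_subnet_ids" {
  type = list(string)
}

variable "database_security_group_id" {
  type = string
}

variable "db_username" {
  type = string
}

variable "db_password" {
  type      = string
  sensitive = true # keeps the value out of plan output
}

resource "aws_db_subnet_group" "main" {
  name       = "prod-wordpress-db-subnets"
  subnet_ids = var.private_db_subnet_ids
}

resource "aws_db_instance" "main" {
  identifier     = "prod-wordpress-rds"
  engine         = "mysql"
  engine_version = "8.0"
  instance_class = "db.t3.micro"

  allocated_storage = 20 # GB
  storage_type      = "gp2"
  storage_encrypted = true # assumption: encryption at rest enabled

  db_name  = "wordpress" # assumption
  username = var.db_username
  password = var.db_password

  multi_az                = true
  backup_retention_period = 7             # days
  backup_window           = "01:00-02:00" # assumption: kept clear of the maintenance window
  maintenance_window      = "sun:03:00-sun:04:00"

  db_subnet_group_name   = aws_db_subnet_group.main.name
  vpc_security_group_ids = [var.database_security_group_id]

  # Keep a final snapshot if the instance is ever destroyed.
  skip_final_snapshot       = false
  final_snapshot_identifier = "prod-wordpress-rds-final"
}
```

Marking `db_password` as sensitive hides it in plan output, but the value is still written to the state file, which is one more reason to keep state in an encrypted remote backend.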

Amazon EFS: Shared File Storage

WordPress requires shared storage for themes, plugins, and media uploads when running multiple instances.

EFS Configuration:

Performance Mode: General Purpose

Throughput Mode: Provisioned (if needed)

Storage Class: Standard

Encryption: At rest and in transit

Mount Targets: One per AZ in private subnets

Why EFS over EBS:

Shared Access: Multiple EC2 instances can mount simultaneously

Automatic Scaling: Storage grows and shrinks automatically

High Availability: Built-in redundancy across AZs

POSIX Compliance: Works seamlessly with WordPress file operations
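The file system itself is a small resource. The sketch below assumes a hypothetical `provisioned_throughput_mibps` variable to switch between throughput modes; the creation token and tag are also illustrative.

```hcl
# Hypothetical input: leave as null for bursting throughput, or set a
# number of MiB/s to switch to provisioned throughput.
variable "provisioned_throughput_mibps" {
  type    = number
  default = null
}

resource "aws_efs_file_system" "main" {
  creation_token   = "dev-wordpress-efs"
  performance_mode = "generalPurpose"
  encrypted        = true # encryption at rest

  throughput_mode                 = var.provisioned_throughput_mibps == null ? "bursting" : "provisioned"
  provisioned_throughput_in_mibps = var.provisioned_throughput_mibps

  tags = {
    Name = "dev-wordpress-efs"
  }
}
```

Encryption in transit is not set on this resource. It is enabled when the file system is mounted, for example with the `tls` option of the EFS mount helper.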

Terraform Implementation

One of the key decisions in this project was organizing the Terraform code into reusable modules rather than creating all resources in a single configuration file.

Module Structure Strategy

I organized the infrastructure into five distinct modules:

modules/

├── networking/ # VPC, subnets, gateways, routing

├── security/ # Security groups and network ACLs

├── database/ # RDS instance and subnet groups

├── storage/ # EFS file system and mount targets

└── compute/ # EC2 instances, ALB, target groups

Benefits of Modular Approach

1. Reusability

# Can be reused across environments

module "networking" {

source = "./modules/networking"

environment = "production" # or "staging", "dev"

vpc_cidr = "10.0.0.0/16"

region = "us-east-1"

}

2. Maintainability

Each module has a single responsibility

Changes to networking don't affect database configuration

Easier to troubleshoot and debug issues

3. Testing

Individual modules can be tested in isolation

Faster development cycles

Reduced blast radius for changes

4. Team Collaboration

Different team members can work on different modules

Clear ownership boundaries

Easier code reviews

Variable Management Strategy

Each module includes comprehensive variable validation:

variable "vpc_cidr" {

description = "CIDR block for VPC"

type = string

validation {

condition = can(cidrhost(var.vpc_cidr, 0))

error_message = "VPC CIDR must be a valid IPv4 CIDR block."

}

}
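A slightly stricter variant of the same variable is sketched below. It is an alternative, not the project's code. `cidrhost` also accepts IPv6 prefixes, whereas `cidrnetmask` only supports IPv4, so it matches the error message more closely. The second check reflects the /16 to /28 range AWS allows for a VPC.

```hcl
variable "vpc_cidr" {
  description = "IPv4 CIDR block for the VPC"
  type        = string

  validation {
    # cidrnetmask returns an error for IPv6 or malformed input, so can() is false.
    condition     = can(cidrnetmask(var.vpc_cidr))
    error_message = "VPC CIDR must be a valid IPv4 CIDR block."
  }

  validation {
    # AWS accepts VPC prefixes from /16 (largest) to /28 (smallest).
    condition = try(
      tonumber(split("/", var.vpc_cidr)[1]) >= 16 && tonumber(split("/", var.vpc_cidr)[1]) <= 28,
      false
    )
    error_message = "VPC CIDR prefix length must be between /16 and /28."
  }
}
```

A variable can carry several `validation` blocks, and each reports its own error message.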

Resource Naming Convention

I implemented a consistent naming strategy across all resources:

Format: {environment}-{project}-{resource-type}

Examples:

- dev-wordpress-vpc

- prod-wordpress-alb-sg

- staging-wordpress-rds

This naming convention provides:

Environment Identification: Clear separation between dev/staging/prod

Resource Grouping: Easy filtering in AWS console

Cost Tracking: Simplified cost allocation by environment
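One way to apply the convention consistently is to build the prefix once and let the provider tag every resource. This is a sketch; the tag keys and the `name_prefix` local are assumptions.

```hcl
locals {
  environment = "dev"
  project     = "wordpress"

  # {environment}-{project}; the resource type is appended per resource.
  name_prefix = "${local.environment}-${local.project}"
}

provider "aws" {
  region = "us-east-1"

  # Applied to every taggable resource created through this provider.
  default_tags {
    tags = {
      Environment = local.environment
      Project     = local.project
      ManagedBy   = "terraform"
    }
  }
}

resource "aws_vpc" "main" {
  cidr_block = "10.0.0.0/16"

  tags = {
    Name = "${local.name_prefix}-vpc" # dev-wordpress-vpc
  }
}
```

For the tags to appear in cost reports, they also need to be activated as cost allocation tags in the AWS Billing console.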

Deployment Process

The deployment process is designed to be straightforward and repeatable across different environments.

Prerequisites Setup

Before deploying, ensure you have:

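# Install Terraform (Debian/Ubuntu, using a dedicated keyring instead of the deprecated apt-key)

curl -fsSL https://apt.releases.hashicorp.com/gpg | sudo gpg --dearmor -o /usr/share/keyrings/hashicorp-archive-keyring.gpg

echo "deb [arch=$(dpkg --print-architecture) signed-by=/usr/share/keyrings/hashicorp-archive-keyring.gpg] https://apt.releases.hashicorp.com $(lsb_release -cs) main" | sudo tee /etc/apt/sources.list.d/hashicorp.list

sudo apt-get update && sudo apt-get install terraform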

# Configure AWS CLI

aws configure

# Enter your Access Key ID, Secret Access Key, Region, and Output format

Step-by-Step Deployment

1. Clone and Initialize

git clone <repository-url>

cd wordpress-aws-infrastructure

terraform init

The terraform init command:

Downloads required provider plugins (AWS)

Initializes the backend for state storage

Prepares the working directory

2. Plan the Deployment

terraform plan -out=tfplan

This command:

Shows exactly what resources will be created

Validates the configuration syntax

Checks for potential issues before applying

3. Apply the Infrastructure

terraform apply tfplan

The apply process typically takes 10-15 minutes and creates approximately 25-30 AWS resources.

4. Verify Deployment

# Check ALB DNS name

terraform output alb_dns_name

# Test connectivity

curl http://$(terraform output -raw alb_dns_name)
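The `terraform output` commands above only work if the root module declares that output. A minimal sketch, assuming the compute module exposes an output with the same name:

```hcl
# outputs.tf in the root module (sketch).
# Assumes modules/compute declares an output named alb_dns_name,
# typically the dns_name attribute of its aws_lb resource.
output "alb_dns_name" {
  description = "Public DNS name of the Application Load Balancer"
  value       = module.compute.alb_dns_name
}
```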

Environment-Specific Deployments

For different environments, set the local values in main.tf to match the target environment. Only one of the following locals blocks should be present at a time:

# Development Environment

locals {

environment = "dev"

project = "wordpress"

}

# Production Environment

locals {

environment = "prod"

project = "wordpress"

}
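An alternative to editing main.tf for each environment is to turn these values into input variables and keep one `.tfvars` file per environment. This is a sketch of that approach rather than what the project does; the file names are hypothetical.

```hcl
# variables.tf (sketch)
variable "environment" {
  description = "Deployment environment"
  type        = string

  validation {
    condition     = contains(["dev", "staging", "prod"], var.environment)
    error_message = "Environment must be one of: dev, staging, prod."
  }
}

variable "project" {
  description = "Project name used in resource names"
  type        = string
  default     = "wordpress"
}

# prod.tfvars would then contain a single line:
#   environment = "prod"
#
# and be selected at plan time with:
#   terraform plan -var-file=prod.tfvars -out=tfplan
```

Each environment also needs its own state, for example a separate backend key or workspace. Otherwise applying with a different variable file would modify the existing environment instead of creating a new one.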

Challenges & Lessons Learned

Building this infrastructure taught me several valuable lessons about AWS networking, security, and Terraform best practices.

Challenge 1: NAT Gateway vs Internet Gateway Routing

The Problem: Initially, I struggled with understanding when to use NAT Gateways versus Internet Gateways and how to properly configure route tables.

The Solution:

Internet Gateway: Provides bidirectional internet access for public subnets

NAT Gateway: Provides outbound-only internet access for private subnets

Route Table Configuration:

# Public subnet route table

resource "aws_route" "public_internet_access" {

route_table_id = aws_route_table.public.id

destination_cidr_block = "0.0.0.0/0"

gateway_id = aws_internet_gateway.main.id

}

# Private subnet route table

resource "aws_route" "private_internet_access" {

route_table_id = aws_route_table.private.id

destination_cidr_block = "0.0.0.0/0"

nat_gateway_id = aws_nat_gateway.main.id

}

Lesson Learned: Draw network diagrams before implementing. Understanding traffic flow is crucial for proper routing configuration.
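The two routes above reference a NAT Gateway and a private route table. The supporting resources could be sketched as follows, assuming hypothetical input variables and a single NAT Gateway, as in the route snippet.

```hcl
# Hypothetical inputs; normally these come from resources in the same module.
variable "vpc_id" {
  type = string
}

variable "public_subnet_id" {
  type = string
}

variable "private_app_subnet_ids" {
  type = list(string)
}

# Static public address for the NAT Gateway.
resource "aws_eip" "nat" {
  domain = "vpc" # AWS provider v5+; older provider versions used `vpc = true`
}

# The NAT Gateway itself sits in a public subnet so it can reach the
# Internet Gateway on behalf of the private subnets.
resource "aws_nat_gateway" "main" {
  allocation_id = aws_eip.nat.id
  subnet_id     = var.public_subnet_id
}

resource "aws_route_table" "private" {
  vpc_id = var.vpc_id
}

# A route table has no effect until it is associated with subnets.
resource "aws_route_table_association" "private_app" {
  count          = length(var.private_app_subnet_ids)
  subnet_id      = var.private_app_subnet_ids[count.index]
  route_table_id = aws_route_table.private.id
}
```

For AZ-level resilience, the same pattern can be repeated with one NAT Gateway and one private route table per Availability Zone.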

Challenge 2: Database Connectivity from Private Subnets

The Problem: EC2 instances in private subnets couldn't connect to the RDS database, even though both were in private subnets.

The Root Cause: Security group rules weren't properly configured to allow MySQL traffic between the web servers and database.

The Solution:

# Database security group allows MySQL from web servers

resource "aws_security_group_rule" "database_mysql_from_webserver" {

type = "ingress"

from_port = 3306

to_port = 3306

protocol = "tcp"

source_security_group_id = aws_security_group.webserver.id

security_group_id = aws_security_group.database.id

}

Lesson Learned: Security groups act as virtual firewalls. Always test connectivity between tiers and use security group references instead of CIDR blocks for internal communication.

Challenge 3: EFS Mount Target Placement

The Problem: EFS mount targets were initially created in public subnets, causing connectivity issues from EC2 instances in private subnets.

The Solution: I moved the mount targets into the private application subnets, one per Availability Zone, next to the EC2 instances that mount them:

resource "aws_efs_mount_target" "main" {

count = length(var.private_app_subnet_ids)

file_system_id = aws_efs_file_system.main.id

subnet_id = var.private_app_subnet_ids[count.index]

security_groups = [var.efs_security_group_id]

}

Lesson Learned: Understand AWS service networking requirements. Not all services work the same way across subnets.

Challenge 4: Managing Terraform State

The Problem: I initially stored Terraform state locally, which caused issues when working from different machines and made collaboration difficult.

The Solution: Implemented remote state storage with S3 backend:

terraform {

backend "s3" {

bucket = "my-terraform-state-bucket"

key = "wordpress/terraform.tfstate"

region = "us-east-1"

}

}

Lesson Learned: Always use remote state storage for any infrastructure that will be maintained long-term or by multiple people.
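For shared use, the backend is usually hardened with encryption and state locking. The sketch below extends the block above. The DynamoDB table name is a hypothetical placeholder, and the table must already exist with a string partition key named `LockID`.

```hcl
terraform {
  backend "s3" {
    bucket  = "my-terraform-state-bucket"
    key     = "wordpress/terraform.tfstate"
    region  = "us-east-1"
    encrypt = true # server-side encryption for the state object

    # State locking prevents two people from applying at the same time.
    # Hypothetical table name.
    dynamodb_table = "terraform-state-locks"
  }
}
```

Recent Terraform releases also offer S3-native locking through the `use_lockfile` argument, so check the S3 backend documentation for the version in use. Enabling versioning on the bucket makes it possible to recover an earlier state file.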

Challenge 5: Resource Dependencies

The Problem: Terraform sometimes tried to create resources before their dependencies were ready, causing deployment failures.

The Solution: Explicit dependency management:

module "compute" {

source = "./modules/compute"

# ... other variables ...

depends_on = [

module.networking,

module.security,

module.database,

module.storage

]

}

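Lesson Learned: Terraform infers dependencies from references between resources and module outputs, but it cannot see relationships that never appear in the configuration. In those cases, explicit depends_on declarations prevent ordering failures in complex deployments.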
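Where a dependency can be expressed as a reference, Terraform orders the modules on its own. A minimal sketch, assuming the networking module exposes outputs named `vpc_id` and `private_db_subnet_ids` and the database module accepts matching inputs (all four names are hypothetical):

```hcl
module "networking" {
  source = "./modules/networking"

  environment = "production"
  vpc_cidr    = "10.0.0.0/16"
  region      = "us-east-1"
}

module "database" {
  source = "./modules/database"

  # Referencing another module's outputs creates the dependency edge:
  # Terraform will not start these resources until the values exist.
  vpc_id     = module.networking.vpc_id
  subnet_ids = module.networking.private_db_subnet_ids
}
```

Passing values this way keeps the dependency graph precise, whereas a module-level `depends_on` makes everything in the module wait for everything in the listed modules.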

Conclusion

Building this traditional 3-tier WordPress infrastructure on AWS using Terraform has been an invaluable learning experience that demonstrates the power and flexibility of cloud-native architectures.

Key Benefits of This Approach

1. Proven Architecture Pattern

The 3-tier architecture has been battle-tested in enterprise environments for decades. It provides:

Clear separation of concerns

Independent scaling capabilities

Well-understood security boundaries

Straightforward troubleshooting paths

2. AWS Managed Services Integration

By leveraging AWS managed services like RDS and EFS, we achieved:

Reduced operational overhead

Built-in high availability and backup capabilities

Automatic security patching

Cost optimization through right-sizing

3. Infrastructure as Code Benefits

Using Terraform provided:

Reproducible deployments across environments

Version-controlled infrastructure changes

Automated resource provisioning

Consistent configuration management

4. Security Best Practices

The implementation follows AWS security best practices:

Defense in depth with multiple security layers

Principle of least privilege for access controls

Network isolation between tiers

Encrypted data storage and transmission

When to Use This Architecture

This traditional 3-tier approach is ideal for:

Legacy Application Migrations: Moving existing applications to the cloud

Predictable Workloads: Applications with consistent traffic patterns

Compliance Requirements: Environments requiring specific security controls

Team Familiarity: Organizations with traditional infrastructure expertise

Cost Predictability: Workloads where reserved instances provide cost benefits

Limitations and Considerations

However, this approach may not be optimal for:

Highly Variable Traffic: Serverless might be more cost-effective

Microservices: Container orchestration platforms like EKS might be better

Global Applications: CDN and edge computing solutions should be considered

Event-Driven Workloads: Lambda and event-driven architectures might be more suitable

Next Steps and Evolution

While this 3-tier architecture serves as an excellent foundation, there are several directions for future enhancement:

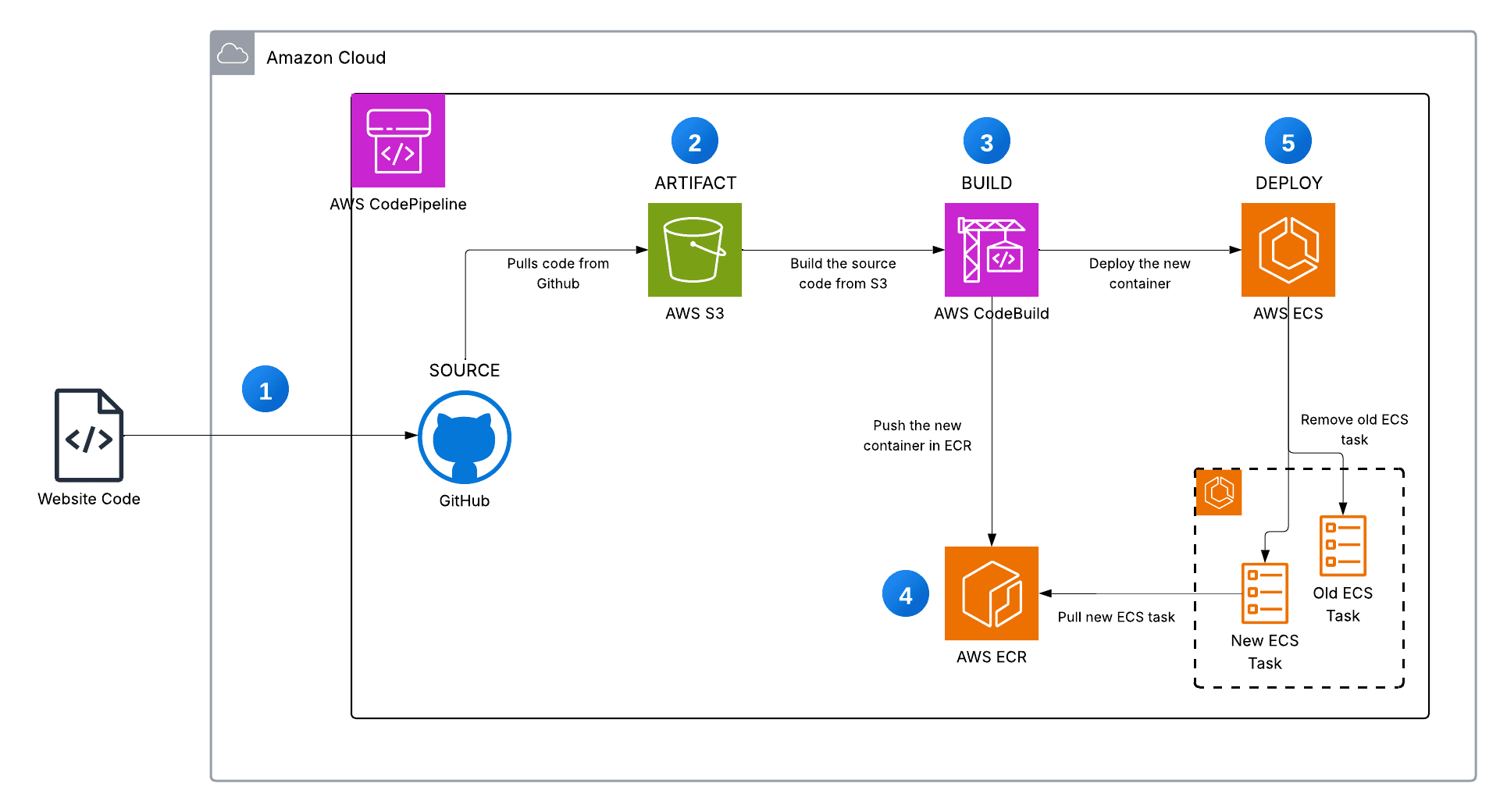

1. Containerization

Current: EC2 instances with traditional deployment

Future: ECS or EKS with containerized WordPress

Benefits: Better resource utilization, easier scaling, improved deployment processes

2. Serverless Components

Current: Always-on EC2 instances

Future: Lambda functions for specific tasks (image processing, backups)

Benefits: Pay-per-use pricing, automatic scaling, reduced operational overhead

3. Advanced Monitoring and Observability

Current: Basic CloudWatch metrics

Future: Comprehensive monitoring with CloudWatch, X-Ray, and custom dashboards

Benefits: Better performance insights, proactive issue detection, improved troubleshooting

4. CI/CD Pipeline Integration

Current: Manual Terraform deployments

Future: Automated deployments with GitHub Actions or AWS CodePipeline

Benefits: Faster deployment cycles, reduced human error, consistent environments

5. Multi-Region Deployment

Current: Single region deployment

Future: Multi-region setup with Route 53 health checks

Benefits: Disaster recovery, improved global performance, higher availability

Final Thoughts

The traditional 3-tier architecture remains a solid choice for many web applications, especially when implemented with modern cloud services and infrastructure as code practices. This project demonstrates that you don't always need the latest serverless or microservices architecture to build robust, scalable applications.

The key is understanding your requirements, constraints, and team capabilities, then choosing the architecture that best fits your specific situation. Sometimes, the tried-and-true approach is exactly what you need.

Whether you're migrating legacy applications to the cloud, building new traditional web applications, or simply learning cloud architecture patterns, the 3-tier approach provides a solid foundation that can evolve with your needs over time.

The infrastructure code and deployment process I've shared here can serve as a starting point for your own projects, and the lessons learned can help you avoid common pitfalls when building similar architectures.

Remember: great architecture isn't about using the newest technology—it's about solving real problems with reliable, maintainable, and cost-effective solutions.

Have you built similar architectures? What challenges did you face? I'd love to hear about your experiences in the comments below.

Tags: #AWS #Terraform #3TierArchitecture #WordPress #CloudInfrastructure #InfrastructureAsCode #DevOps

LinkedIn: linkedin.com/in/ramon-villarin

Portfolio Site: MonVillarin.com

Github Project Repo: https://github.com/kurokood/traditional_3_tier_website_deployment_on_aws